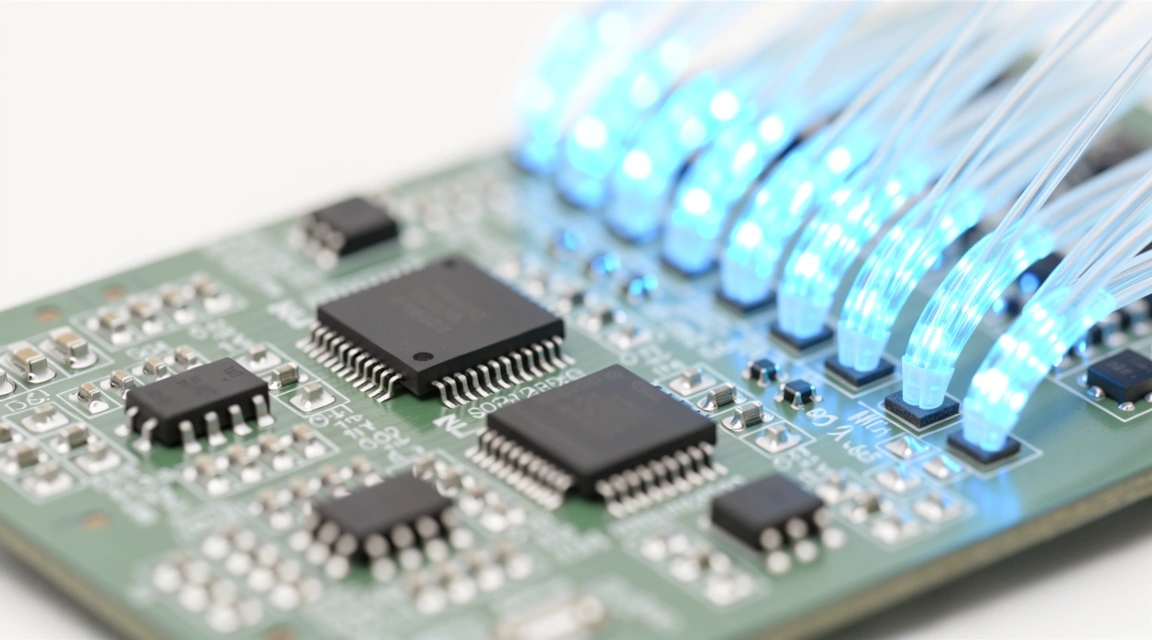

Hardening the Inference Layer.

Protecting Generative AI requires more than a standard firewall. We focus on the specialized technical architecture needed to prevent model inversion, poisoning, and prompt injection at the source.

Live Status

Defense frameworks updated for Q2 2026 threat vectors.

Differential Privacy in AI

When training models on sensitive Malaysian enterprise data, the risk of data leakage via membership inference attacks is high. We implement mathematical noise injection—Differential Privacy—ensuring individual data points cannot be reconstructed from model outputs. This allows for robust training while maintaining strict compliance with regional data protection standards.

Real-time Model Monitoring

Our technical stack implements continuous drift detection and anomaly scoring for model weights and activation patterns.

- Input Sanitization Layers

- Output Filtering & Hallucination Checks

- Token-level Rate Limiting

Anti-Poisoning Protocols

Securing the data supply chain is paramount. We deploy automated hashing and provenance tracking to ensure training data integrity from ingestion to training.

Advanced AI Encryption

Data at rest is vulnerable; data in use by LLMs is often exposed. Our strategy includes:

Homomorphic Encryption Processing encrypted data without ever needing to decrypt it during the inference cycle.

TEE (Trusted Execution Environments) Utilizing secure hardware enclaves to isolate model computations from the host OS.

Implementing Red-Teaming as a technical baseline.

Defenses are theoretical until tested. Mrs. Varo Digital advocates for automated adversarial testing pipelines. We don't just set policies; we build the technical benchmarks that stress-test your AI systems against prompt injection and jailbreaking attempts before they reach production.

Ready to secure your ML pipeline?

Technical defense is a moving target. In Malaysia's rapidly evolving AI landscape, staying ahead of malicious actors requires a proactive stance on model security and architectural integrity.

Direct Consultation for Enterprises

Technical Control Inventory

Training Phase

Data scrubbing, differential privacy, and outlier detection in training sets.

Deployment Phase

Container hardening, API gateway authentication, and tokenization.

Inference Phase

Adversarial noise filters, content moderation, and PII masking.

Observability

Weight drift logging, latent space mapping, and integrity alerts.